LLMs Are Hungry for Failed Companies’ Slack Chats

Customer privacy concerns are top priority. But what about employees?

Regardless of how one feels about AI, we may soon have no say in whether we are training it. Startups like SimpleClosure buy and sell shuttered companies' data through an "Asset Hub," and this data is being used to train AI models. That data can include sensitive employee information. In SimpleClosure's own words, "You worked hard on what you built. Even if the company didn't work out, that doesn't mean what you created has no value. We know it does—and we built a platform to prove it." Between options for failed startup founders to license code to "vetted buyers" and workplace data sales in beta, SimpleClosure aims to "honor" failed companies by letting their "legacy live on." That may be an admirable sentiment, but the workplace data and code are then used not by humans but by AI, to create "reinforcement learning gyms," or RL gyms, which train AI models by letting them "practice" various tasks and requests.

According to The Information, leaders at Anthropic discussed spending upwards of $1 billion on RL gyms. This raises further concerns about the scale at which workplace data is being fed to AI models. SimpleClosure has handled upwards of 100 deals, some reaching six figures. Are Slack messages from failed organizations the kind of data we want to be training models on? AI expert Brian Roemmele shared a post on X likening the training of LLMs on Slack and email workplace chatter to feeding cows cardboard and expecting their milk to be of higher quality. He goes on to say that models fed with this data will "inherit the worst habits of dysfunctional office culture instead of first-principles reasoning."

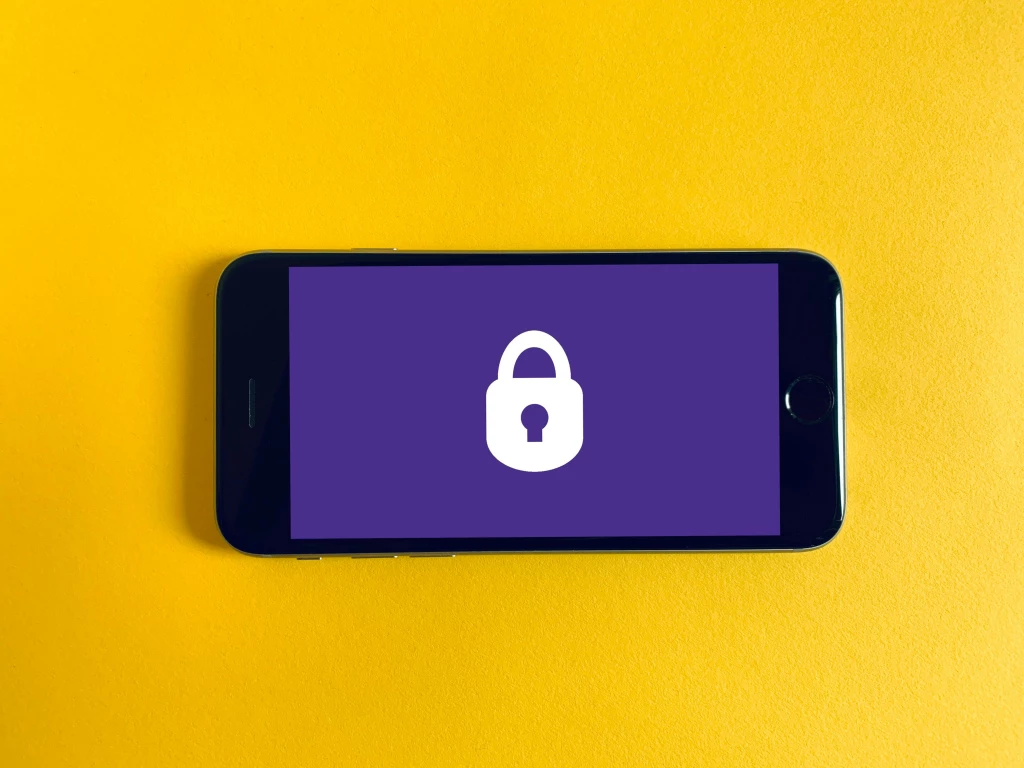

SimpleClosure is not the only company working out where the line falls on whose data belongs to whom. Slack, bought by Salesforce in 2021, became AI-powered in January 2026. Its methods for training its AI tools, such as the built-in personal assistant "Slackbot," have recently come under fire. Users of the workplace communication platform are automatically opted in to AI training, and anyone who does not want to take part has to send an email to opt out. The technology behind Slack's AI bot is powerful: it uses internal company data to understand relationships between employees, then organizes and tailors messages accordingly. Slack, for its part, says it is adamant about security.

Screenshot from https://slack.com/trust/data-management/privacy-principles

Customer privacy concerns are often top of mind in the CX world and beyond. But how do we treat employee privacy? Marc Rotenberg, founder of the Center for AI and Digital Policy, said, "Employee privacy remains a key concern, particularly because people have become so dependent on these new internal messaging tools like Slack…It's not generic data. It's identifiable people." There is no question that employee sentiment trickles down to customer sentiment, and if employees don't feel protected and valued, neither will customers. Regardless of their loyalty to a company, employees deserve transparency about how their data is used, even if the company isn't ultimately successful. Employees' value should be expressed in word, yes, but more importantly in action.

Image credit to Mikhail Nilov via Pexels.